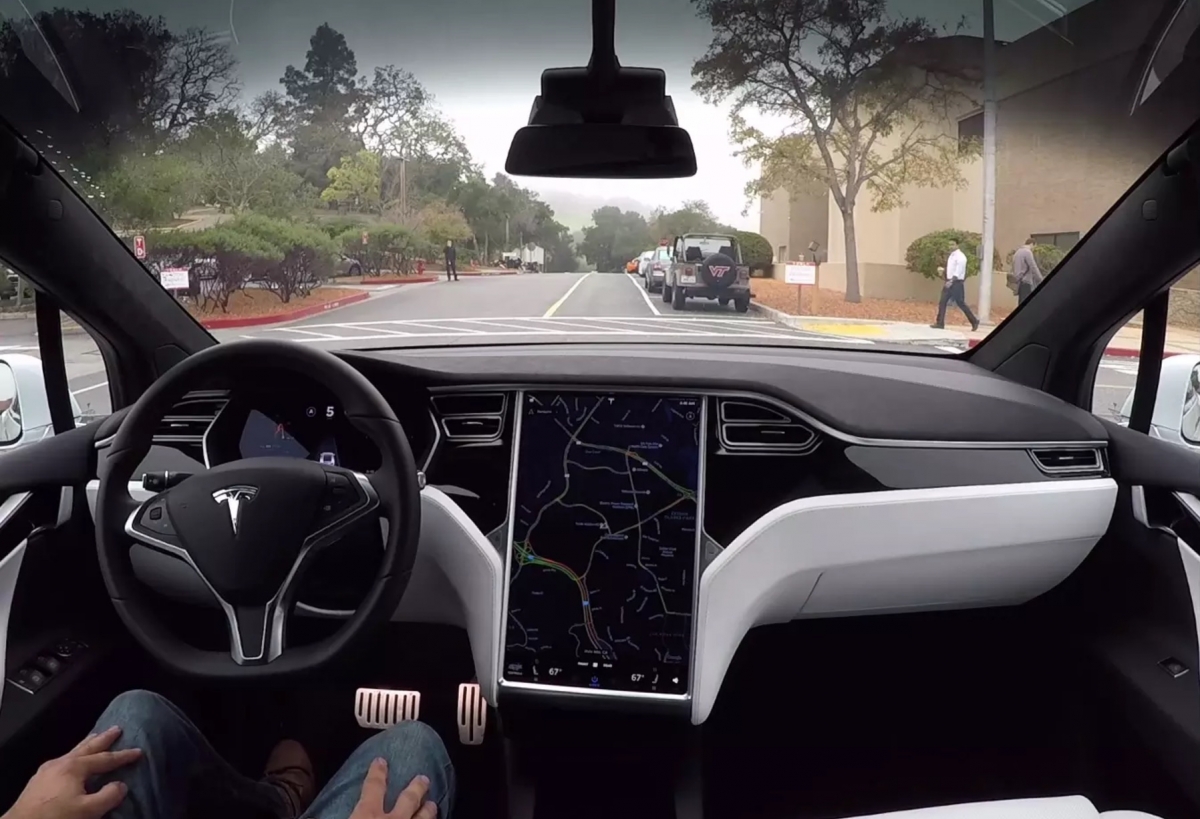

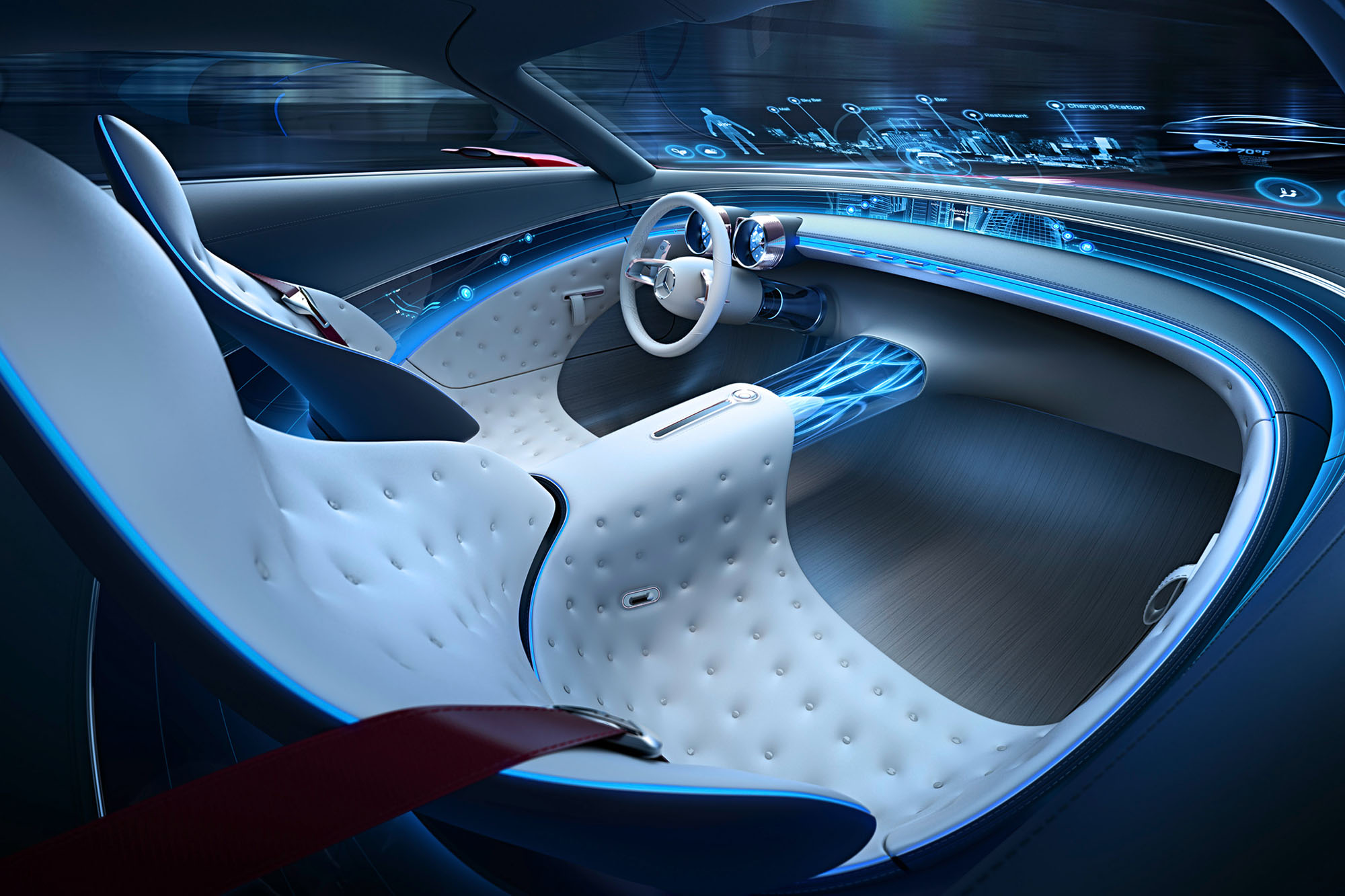

Remember the 80s television show Knight Rider? If so, I’m sure you are picturing the uber-cool black Pontiac Firebird with the blinking red lights. The show was a hit, mostly thanks to the futuristic, feature-packed beast of a car that assisted its undercover-cop owner in solving crimes. The car could drive itself, follow voice commands, make decisions and so much more.

Today, big players like Tesla Motors, Mercedes-Benz and Audi are working hard to make cars smarter by the day, and we can see the Knight Rider car becoming a reality. Equipped with various sensors and complex electronic systems, cars can assist drivers with near self-driving capability. The responsibility for making safety and navigation decisions is shifting from the driver to artificially intelligent systems. Easy integration of software such as Android Auto and Apple CarPlay with in-car systems adds the ‘Smart’ factor to almost any car. As consumers are exposed to smarter vehicles in this rapidly changing landscape, several challenges arise, many of which can be tackled with User Experience techniques.

UX Challenges in Smart Cars

Driver Distraction

Infotainment systems in cars are extremely useful for viewing and controlling various settings and features as well as managing the entertainment systems. However, driver interaction with these systems can be a source of distraction. Wired connections between the infotainment unit and personal devices can also cause physical obstruction in some cases, not to mention the painful process of managing cables and finding hard-to-reach connection ports. These issues are not only frustrating for the driver but can also lead to accidents (Reuters, Car Dashboards that act like smartphones raise safety issues, July 2015).

Trust & Confidence

With advanced systems on board, cars these days can predict collision scenarios and detect pedestrians, among other intelligent actions. When the car makes many of the decisions for you, a lack of trust or confidence can easily creep in (Mashable, Self-Driving Cars Still Need to Earn the Public’s Trust, Oct 2017). Questions like “What if the system doesn’t work?” and “What if there is a malfunction?” are quite common when users first experience smart cars.

Perceived Safety

Drivers or passengers can develop a high level of perceived safety due to the powerful and promising capabilities of smart cars. While it is a good idea to make people feel safe, it can also lead to negative results, such as drivers relying completely on the onboard systems and not paying sufficient attention during dangerous situations (Auto-ui.org, User Experience Design for Vehicles, 2012).

Driver Emotion

The driver’s emotional state is an important issue for automotive safety. Emotions affect perception and action, which makes them extremely relevant to driving. Emotions negatively affect several driving behaviors, and stress, anger and aggression are linked to accidents. Frustration caused by poor usability of in-car interfaces and controls, or by difficulty navigating, can also contribute heavily to a negative emotional state (Auto-ui.org, User Experience Design for Vehicles, 2012).

How UX Design is Making Smart Cars Better

User (Driver) Research

A driver can be in many possible contexts, be it a slippery drive on the rainy roads of Portland or a foggy encounter on the steep hills of San Francisco. If these contexts are not kept in mind while designing for smart cars, there is a high chance that the design will fail, and failure in a driving scenario could have terrible consequences. Research is hence essential: UX practitioners observe and interview people driving in real-world scenarios, uncovering their pain points and understanding their behavior (Key Lime Interactive, User Research Methods for Automotive User Experience, Oct 2015).

Google, for example, conducts its research by simulating real-world scenarios and combining them with special eye-tracking sensors to understand how drivers interact with Android Auto (Android Central, This is how Google Tests the Safety of Android Auto, 2016).

Augmented Reality (AR)

Many companies these days are working on technologies and techniques to augment reality for the drivers to prevent distractions and instill a sense of confidence. This is often achieved by creating overlaying interfaces that either appear directly on the windscreen of the vehicle or appear on top of a real-time view of the environment around the car displayed on the infotainment screens. The interface elements are intentionally made translucent, allowing good visibility of the environment with minimal obstruction. UX designers make use of typography, design principles and user research to create and refine the design of the overlaying interfaces for clarity and understandability (CNET – Roadshow, Augmented Reality in the Car Steps Towards Production at CES 2017, Jan 2017).

Visualizations and graphical representations are heavily used to allow quicker recognition through peripheral vision, so that drivers do not need to fixate on the interface instead of the road. These techniques also make it possible to give users timely feedback on decisions made by the car’s artificial intelligence and to display information about upcoming decisions beforehand. This builds trust with the user, as it removes the element of surprise when the car makes a decision.

An example of AR in vehicles is Harman’s LIVS concept showcased at CES 2017, which is capable of placing street signs over intersections, greatly helping drivers (Harman, HARMAN Unveils the Ultimate In-Car Experience for Intelligent, Intuitive Autonomous Driving at CES 2017, 2017).

Voice Commands & Gestures

Gestures and voice commands are powerful ways to interact with smart cars. They reduce the hand-eye coordination effort and the need for haptic feedback that come with using touchscreen interfaces or other controls of the car. With devices such as Google Home and Amazon Alexa on the market and assistants like Siri and Google Assistant on almost every smartphone out there, consumers are getting more comfortable with using voice commands as time passes. Gestures are second nature to humans, and it is a great idea to leverage this human trait for interaction with smart cars (Medium, Reimagining In-Car UX, Sep 2014).

For instance, BMW allows drivers to use gestures to accept in-car phone calls and control the music volume. Several voice commands also exist for tasks that drivers are highly likely to perform, such as navigation (Medium, 5 Car UX Design Trends, Aug 2015) (Carnation Software, BMW Owner’s Manual).

UX designers incorporate these interaction methods to reduce the cognitive load on drivers, offer a more natural and usable way to perform actions, and increase driver confidence. Maybe one day we can finally say goodbye to blindly and clumsily hunting for knobs in some corner of the car.

Conclusion

Many significant advances have been made where the automotive and digital fields meet, and they will continue well into the foreseeable future. Consumers have also become more immersed in the digital landscape, and their needs and demands are growing rapidly. With increasing competition and easier access to advanced technology, it is essential for manufacturers to ensure top-notch in-car UX to maintain their position in the market. We might still be a long way from a time when every vehicle is self-driven, with the most advanced and intelligent systems on board, but it is quite intriguing to imagine the new ways humans will interact with these marvels and how UX professionals will shape the in-car experience.