What is the PURE Methodology and why is it important?

While interning with TechSmith this summer, I got the opportunity to participate in a usability evaluation method called PURE (Pragmatic Usability Rating by Experts). It was my first experience with the method, and I found it very productive and easy to carry out.

According to Nielsen Norman Group, “PURE is a usability-evaluation method in which usability experts assign one or more quantitative ratings to a design based on a set of criteria and then combine all these ratings into a final score and easy-to-understand visual representation.”

Benefits

Quantitative and Qualitative Data: As a UX professional, it is important to communicate findings from your research and testing in a way that your team and other stakeholders can interpret. There is a constant need for quantitative data to represent those findings. Because the results of PURE take the form of ratings and scores, it becomes relatively easy to communicate them to stakeholders who may not readily understand qualitative data, especially those on the business side.

Requires Less Time and Resources: You don’t need to involve real users in this process, as it’s an analytical usability evaluation method. It is definitely not a replacement for usability testing, but it is a great way to evaluate your application in different phases of design and testing, or to evaluate your competitors.

What do you need for PURE?

Usability Experts: To perform this method, the panel should consist of at least three usability experts.

When we conducted PURE, our panel consisted of designers as well as researchers, and more than three experts evaluated the intended applications. So you can include designers in this process as well, but they need a prior understanding of user-centered design.

A Facilitator: The facilitator is responsible for keeping track of time, organizing meetings, creating and managing the required documents (Score Sheet and Scorecard), and ensuring that everyone adheres to the predefined target audience.

Target User/Persona: It is necessary to “know your audience” well before you proceed with PURE. Focusing on a persona or an empathy map is especially helpful, because you can revisit your target user whenever needed, which keeps the evaluators, the facilitator, and all associated stakeholders on the same page.

How to do it?

Identify Target User: The target user type can be decided by the product manager, the lead designer, or the expert panel. I think it’s very important to settle on a specific persona or target audience, since it guides the expert panel throughout the evaluation.

Identify the Main Tasks: You need to identify the key tasks for the selected persona or empathy map, for example ‘Buy Tickets’. When selecting tasks, it’s important to consider the business context for the product as well. Nielsen Norman Group recommends “to keep the number of tasks close to 10, and no more than 20, at least when you first start using this method”. When we ran PURE for the first time, we defined 7 tasks in all across two applications (our competitors), such as installing an application and purchasing items.

Determine Happy Paths: A task can often be accomplished in many ways. The happy path is the most desired path the target audience would take to accomplish a task. Evaluating and improving the happy path is an effective way to make the task simpler for users. In our PURE process, a senior researcher and a designer collaborated to determine all the fundamental tasks and their happy paths.

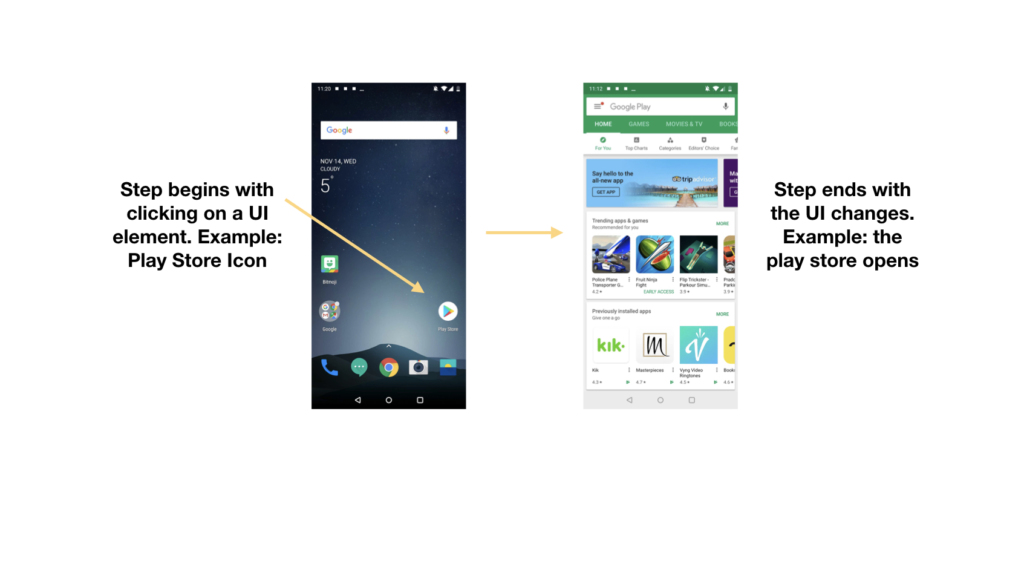

Step Boundaries for Each Task: Break the happy path into small steps. A step begins when the system presents the user with a set of options and waits for user input to proceed. Micro-interactions need not be treated as separate steps.

Individual Rating by Expert Panel

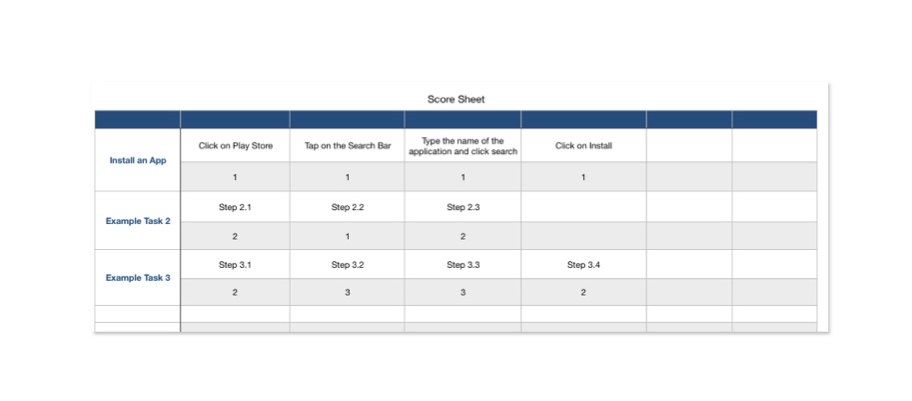

The facilitator needs to create a document in which evaluators enter ratings for every step of each task. This document is referred to as the Score Sheet. In it, the facilitator lists all the predefined tasks and the steps involved in each.

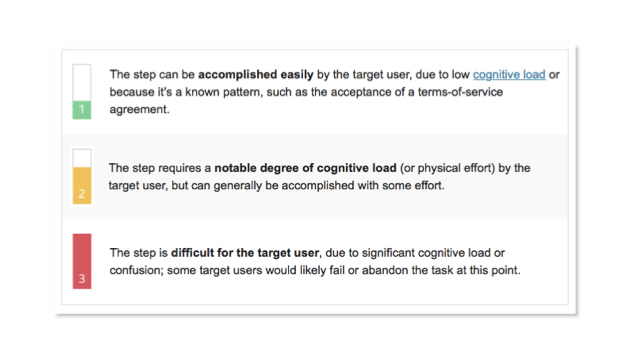

The evaluators then rate each step individually as 1, 2, or 3, based on the cognitive load the step places on the target user.

Calculate Inter-Rater Reliability Score

The facilitator now collects the individual scores. To gauge how much the evaluators’ ratings disagree, the facilitator uses the scores to calculate an inter-rater reliability score.
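The exact statistic we used is not covered here, so the following is only a minimal sketch of one simple option: average pairwise agreement, meaning the share of evaluator pairs that gave the same rating to a step. The function name and the sample ratings are hypothetical. Chance-corrected measures such as Fleiss’ kappa are stricter alternatives.

```python
from itertools import combinations


def pairwise_agreement(ratings_by_step):
    """Return the fraction of evaluator pairs that agree, across all steps.

    ratings_by_step: list of lists; each inner list holds one rating
    (1, 2, or 3) per evaluator for a single step.
    NOTE: this is a simple stand-in, not necessarily the statistic
    a PURE facilitator would use.
    """
    agreeing_pairs = 0
    total_pairs = 0
    for step_ratings in ratings_by_step:
        # Compare every pair of evaluators on this step.
        for first, second in combinations(step_ratings, 2):
            total_pairs += 1
            if first == second:
                agreeing_pairs += 1
    if total_pairs == 0:
        raise ValueError("need at least two ratings on at least one step")
    return agreeing_pairs / total_pairs


# Hypothetical ratings: four steps, three evaluators each.
ratings = [
    [1, 1, 1],  # full agreement
    [2, 2, 3],  # one agreeing pair out of three
    [1, 2, 3],  # no agreement
    [3, 3, 3],  # full agreement
]
agreement = pairwise_agreement(ratings)
print(f"Pairwise agreement: {agreement:.2f}")
```

A low value suggests the panel should spend more of the discussion on the steps where the ratings diverge.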

Final Scores

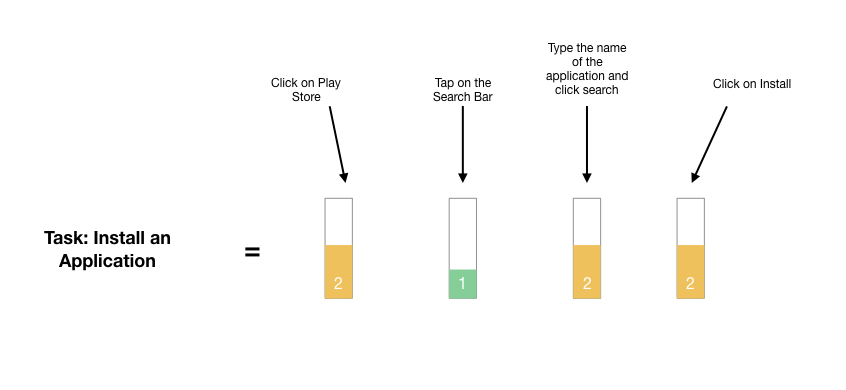

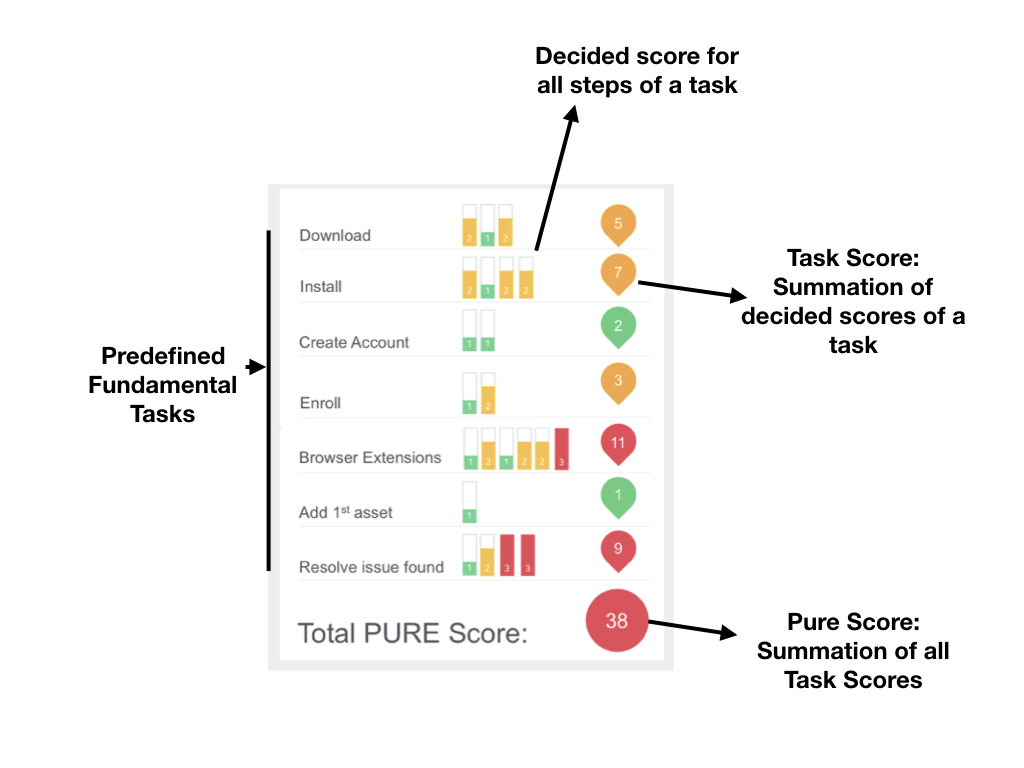

Next, the expert panel walks through all the steps of each task and discusses them. The discussion helps everyone understand the perspective and reasoning behind the scores given by the other evaluators, and helps the panel decide on a single score for each step. The decided score is then considered the PURE score for that step. However, we tweaked this process a little when we performed PURE. Instead of agreeing on a single score, the evaluators were free to change their own scores after hearing the perspectives of the other expert raters. The facilitator then calculated the average of the scores given by all the evaluators for each step and rounded it off to the nearest whole number, which acted as the ‘Decided Score’.
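The averaging variant we used can be sketched in a few lines. This is an illustration only: the function name is hypothetical, and rounding exact halves upward is my assumption, since we only required the nearest whole number. Python’s built-in round() sends exact halves to the nearest even number, so the sketch uses floor(mean + 0.5) instead.

```python
import math


def decided_score(ratings):
    """Average the evaluators' ratings for one step and round to the
    nearest whole number (halves round up; this is an assumption)."""
    if not ratings:
        raise ValueError("at least one rating is required")
    mean = sum(ratings) / len(ratings)
    # floor(mean + 0.5) rounds halves up, unlike round(), which
    # rounds exact halves to the nearest even integer.
    return math.floor(mean + 0.5)


# Hypothetical post-discussion ratings for three steps.
print(decided_score([1, 1, 2]))     # mean 1.33
print(decided_score([2, 3]))        # mean 2.5
print(decided_score([1, 2, 2, 3]))  # mean 2.0
```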

Scorecard: All the decided scores are brought together on a single sheet called the Scorecard. The step scores are summed for each task and shown to the right of that task as its Task Score. All Task Scores are then summed to calculate the final score for the application.
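The Scorecard arithmetic is simple enough to sketch. The function name and the step scores below are hypothetical; only the two-level summation (steps into Task Scores, Task Scores into the final score) comes from the process described above.

```python
def build_scorecard(decided_scores):
    """Sum decided step scores into Task Scores and a final score.

    decided_scores: dict mapping a task name to the list of decided
    scores for its steps, in order.
    """
    task_scores = {task: sum(steps) for task, steps in decided_scores.items()}
    final_score = sum(task_scores.values())
    return task_scores, final_score


# Hypothetical decided scores for two tasks.
decided = {
    "Install the application": [1, 2, 1],
    "Purchase items": [2, 3, 1, 1],
}
task_scores, final_score = build_scorecard(decided)
for task, score in task_scores.items():
    print(f"{task}: {score}")
print(f"Final score: {final_score}")
```

Because the scores are sums, a task with more steps tends to score higher, so Task Scores are easiest to compare between applications when the same tasks are evaluated.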

Any Improvements?

Multiple Happy Paths: Instead of sticking to just one happy path for each task, it would be great if multiple happy paths for a single task could be evaluated. When we reflected on our PURE process, we also found that the evaluators were interested in discussing and determining the different happy paths for each task together.

More than a 3-Point Scale: When we were assigning ratings to the steps of each task, it was really difficult to settle on a score with only a 3-point scale to use. Additionally, we were constrained to rate on the basis of ‘Cognitive Load’, which led us to disregard a few other usability issues that we encountered during our evaluation. It would be interesting to have at least a 5-point scale that also considers other parameters, to make the evaluation more inclusive and comprehensive.

Conclusion

Finally, I would say that PURE is an impressive evaluation method that provides results in the form of qualitative as well as quantitative data. There are definitely a few areas where it could be improved, as discussed in this article, but it’s still in its nascent phase. It will be interesting to see how PURE grows in the next few years. Overall, it is a good answer to the question ‘Give me the numbers’.